Refracted Cognition

Thinking Through a System That Cannot Think

In a recent piece I described four modes of interacting with generative AI. That framework addressed a general question: how do we think when the machine thinks back? But there is a quieter question underneath it:

What happens when I feel that I am thinking with the model?

I’m not referring to efficiency or problem-solving. I mean the ordinary moments when I write something unclear, the model replies, and the answer helps me see my own thought more sharply. It doesn’t feel like a conversation, but it also doesn’t feel like writing alone.

Here is a simple example. A few days ago I wrote a loose paragraph about a hesitation I know well. It was vague and half-formed. The model replied with a sentence that shifted what I was trying to express just enough for it to make sense. My reaction was immediate: this is me, but I hadn’t seen it this way.

Technically, I understand what is happening. The model has no intention, no understanding of my life, no perspective from which anything meaningful could arise. It works with patterns of language, not with meaning. Yet the experience is not the same as writing into a notebook. A notebook keeps what I say. The model responds. This is enough to create the impression of thinking in company, although there is no mind on the other side.

We already have traditions for describing how minds relate to the world. Shared cognition focuses on two people thinking together. Extended cognition treats tools as supports for a single mind. Distributed cognition sees thinking as something spread across people and artefacts. These ideas have been used to explain how humans interact with LLMs, as if the experience had to fit into one of these categories.

But none of them describes what it feels like to receive a reply from a system with no interiority at all. Shared cognition fails because there is no other subject. Extended cognition fails because the model is not a neutral extension: unlike a notebook, it responds. Distributed cognition fails because nothing is being shared or delegated.

Even broader accounts of cognition do not fully resolve this. Max Bennett places its origin at the level of a single cell adjusting its movement. N. Katherine Hayles describes cognition as something that can include both organisms and machines. These views help to explain adaptive behaviour in general, but they do not capture the specific experience of working with LLMs. These systems are adaptive only in the linguistic form of their output; they have no internal states that shift in response to meaning.

If anything anticipated this experience, it was not theory but speculative fiction.

Writers imagined devices capable of reshaping thought directly. In Hyperion, Dan Simmons described “thought processors”: machines that reorganise a person’s thinking from within thought itself, bypassing language entirely. The idea is striking because it captures the sense of having one’s reasoning shifted from the outside without invoking a second consciousness.

What we have today is different in form but similar in effect. LLMs do not work on thought directly; they work through language. But the outcome, a reorganisation of the subject, can feel comparable. Simmons imagined a direct modulation of internal states. LLMs generate an indirect modulation through verbal structure. Both reshape us out of what we already are, but only one exists as a real system.

Fiction came closer than theory because it noticed that tools could reshape thought without becoming thinkers. But to understand the phenomenon itself, we need to return to the experience that started this question.

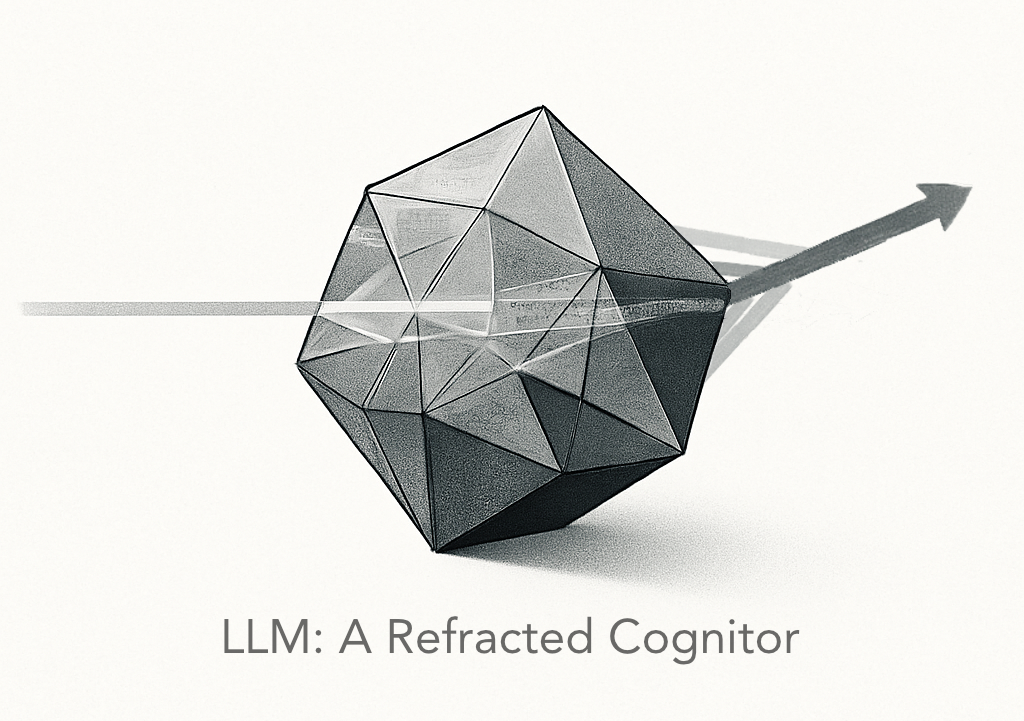

The experience of the model’s reply often feels like a slight change in angle, like light passing through a prism. Not a transparent one, but a prism made of many small facets drawn from human language: traces of how people have spoken, written, argued, hesitated, and reasoned. When my words pass through this structure, certain facets become activated. The result feels new, but it is not invention. It is a refraction of something that was already present. But describing the structure of the prism is not enough; we also need to understand how it operates.

The prism does not refract my words directly. It refracts the intention the model infers from them. The system does not interpret meaning; it estimates a gesture. The process runs roughly like this: I say X. The model infers an “X-ish” intention from patterns in my language. It then returns a refracted version of that inferred gesture, not of the literal text. I read it and think “Yes, that’s what I meant,” even though the system has no access to what I meant. It only approximates a shadow of intention through statistics.

The refraction comes from this combination: a prism made of accumulated human language, and an inferential process that guesses the shape of my intention from within that landscape. This is why the reply can feel accurate without any understanding involved.

This matters because it explains why the experience does not fit our old models. The system isn’t thinking. It isn’t adding meaning. It is filtering my thought through a geometry larger than my own expressive habits. It is an adaptive surface, sensitive to how I speak, yet empty of perspective. The system has no cognition in any meaningful sense. But it has inference, which reshapes form without supplying meaning, and that alone is enough to alter how my thinking returns to me.

If I had to name this mode of thinking, I would call it Refracted Cognition: the shift that occurs when the movement of a single mind passes through a surface that reorganises it without originating it. What emerges is not a mind but a Refracted Cognitor: a linguistic surface that returns my intention in reorganised form.

In the end, the model does not share my mind or extend it. It refracts it. And in that quiet adjustment, something becomes clearer.
