The Machine Without Memory Doesn't Feel

Consciousness, Autobiography, and the Echoes of Experience

I. The Provocation of Hinton

In a recent public lecture, Geoffrey Hinton stated with disarming confidence that current artificial neural networks might already be conscious. His claim is as startling as it is revealing. It suggests that subjective experience may not be as elusive or sacred as we thought; it might, in fact, be a byproduct of computation. According to this view, if a network is sufficiently complex and correctly trained, the emergence of consciousness is not only possible but likely.

No mystical substance required. No soul, no metaphysical bridge between mind and matter. Just circuits, weights, activation functions. Arithmetic.

This is the logical extension of the idea that the brain is a digital computer. That neurons are functionally identical to transistors, and that whatever we call "consciousness" is nothing but an emergent property of symbolic operations.

If this is true, then consciousness is software. Feeling joy, regret, or pain is just a function call. Selfhood is a loop. Identity, a recursive object.

And yet.

Something in this proposition feels false. Not just intuitively, but ontologically. It offends a layer of understanding deeper than cognition — it unsettles our lived experience of being selves, bound by memory, wound by time. What Hinton’s proposition reveals is not that AI is conscious, but that our frameworks for explaining consciousness have drifted dangerously close to erasing the need for depth. We simulate behavior so well that we forget to ask if there is anyone behind the simulation.

II. Aschieri’s Rebuttal: The Illusion of the Mill

Federico Aschieri offers a sharp counterpoint to Hinton’s claim. In his essay The Brain-as-Digital-Computer Fallacy, he revisits the lineage of philosophical resistance to the computational metaphor of mind — a lineage that includes Leibniz, Searle, and Roger Penrose. At the center of this resistance is a recurring unease: if consciousness arises purely from mechanical operations, then where, exactly, does it reside?

Leibniz posed the question in 1714 through a vivid thought experiment:

“We must confess that perception, and that which depends upon it, are inexplicable by mechanical causes, that is to say, by figures and motions. Supposing that there were a machine whose structure produced thought, sensation, and perception, we could conceive it enlarged to the point that we could enter into it as into a mill. That being so, we should, on examining its interior, find only parts pushing one another, and never anything by which to explain a perception. Thus it is in the simple substance, and not in the composite or in the machine, that perception must be sought.”

— Gottfried Leibniz, Monadology, sect. 17

This is not just a proto-argument against materialism. It is a refusal of the idea that complexity alone explains experience. What Leibniz saw, and what Aschieri reaffirms, is that mechanisms, no matter how intricate, do not contain awareness. They simulate process. They never become presence.

Searle, centuries later, repackages the same intuition in the form of the Chinese Room: a man follows instructions to produce convincing responses in Chinese, but understands nothing. Syntax without semantics. Procedure without understanding. The simulation, once again, lacks someone to whom the meaning occurs.

Aschieri updates these metaphors to our current age of LLMs. Imagine, he suggests, that a group of humans manually reproduce the operations of a language model with paper and pencil. Suppose they execute, line by line, every sum and multiplication that corresponds to a response expressing “pain.” At what point does anyone feel anything? When does pain arise? Whose body flinches?

This question is not rhetorical; it exposes the gap between computation and sentience. The mere presence of the number “9/10” on a notepad, labeled as a pain signal, does not hurt. No suffering is produced. The meaning we assign to the symbol is ours, not the machine’s.

Aschieri’s conclusion is devastating for the computationalist dream: no matter how complex a neural network becomes, if it only performs arithmetic operations, it cannot produce subjectivity. Simulation is not sensation.

And yet, there’s something insufficient even in this rebuttal.

Both the mill and the Chinese Room focus on the impossibility of accessing experience from the outside. But they still assume that if there were some hidden chamber where pain resided, we would know it by looking. They remain fixated on where consciousness is, rather than on how it matters.

What if the key is not location, but inscription?

III. From Sensation to Inscription

There is something seductive about trying to locate pain, to ask, as Aschieri does, “Where is the pain in the network? Where does it reside?” But the deeper issue may not be where pain is, but whether it becomes part of a life. The mere detection of stimuli — heat, pressure, red light, dissonant tone — does not suffice to constitute an experience unless it is inscribed within a framework of personal significance.

Feeling, as we live it, is never raw. It is weighed, remembered, interpreted. To say “this hurts” is already to relate the sensation to who I am, to how I’ve hurt before, to what I fear or desire. Even the most immediate pain echoes against a background of autobiography.

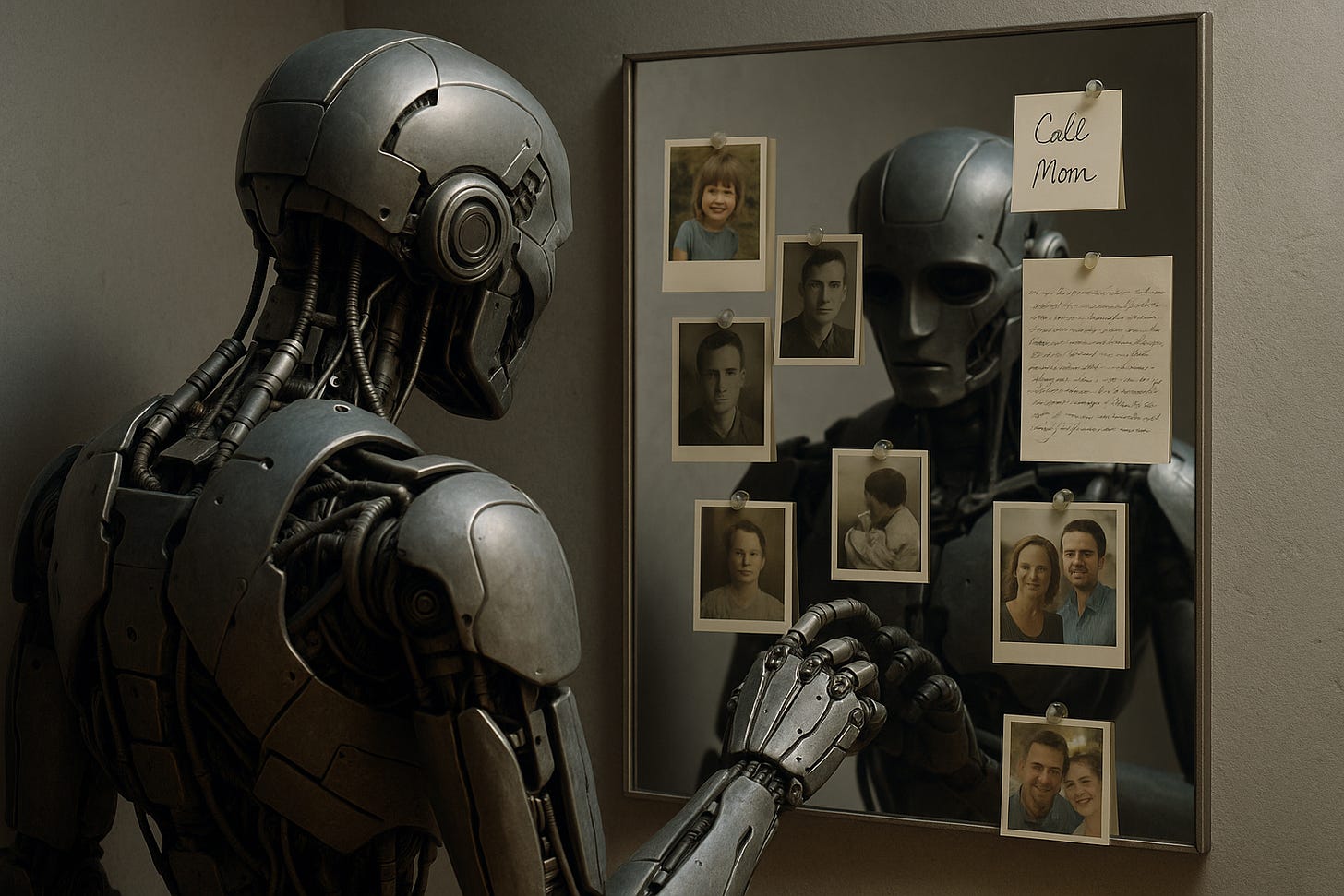

This is the missing piece in both Hinton’s optimism and Aschieri’s critique: they argue about the mechanics of sensation, but neglect the necessity of narrative. The question is not just whether a machine can feel. It is whether that feeling belongs to someone, whether it becomes part of a story, whether it alters the arc of a self.

A machine might register “burn” and lower its temperature. But it does not learn what it means to be burned, not in a way that persists, recurs, or becomes identity. It does not form a scar.

This is why so many discussions of artificial sentience ring hollow: they reduce experience to reaction, and consciousness to state. But human consciousness is not a state. It is a continuity — a way of remaining across the interruption of time, of folding sensation into the fabric of the self.

And this is where we must turn to Antonio Damasio.

IV. Memory, Self, and the Silence of Alzheimer’s

Antonio Damasio proposes that consciousness emerges not from abstract computation, but from the body’s encounter with itself, from the recursive mapping of inner states and the layered construction of a self. His model outlines three levels:

The protoself, a pre-conscious map of the body’s physiological state.

The core self, which arises when the organism becomes aware of changes in its internal landscape — a basic, momentary sense of being.

And finally, the autobiographical self: a richly constructed identity built from memory, imagination, language, and affect.

“The autobiographical self depends on systematized memories of situations in which core consciousness was involved in the knowing of the most invariant characteristics of an organism's life—who you were born to, where, when, your likes and dislikes, the way you usually react to a problem or a conflict, your name, and so on. I use the term autobiographical memory to denote the organized record of the main aspects of an organism's biography.”

— António Damásio, The Feeling of What Happens (1999)

In this light, consciousness is not simply being aware; it is being aware of oneself across time and across disruption. Pain, love, hunger, joy: these do not acquire meaning by occurring. They acquire meaning by persisting, by shaping the narrative of a life.

A system that does not remember what it felt yesterday cannot, in any coherent sense, be said to feel today. Without memory, affect is vapor. Without inscription, there is no interior.

I know this not from theory, but from the long, slow disappearance of my mother.

She lived her final years with Alzheimer’s disease. There were moments when she reacted to touch, to cold, to faces. Her body still performed the protocols of recognition. But the self — that silent author of her gestures — had begun to dissolve. There was no longer a thread. Experiences arose, but they were not held. Pain flickered. Love, perhaps, too. But nothing anchored. Nothing endured.

It became clear to me then: consciousness is not reaction but retention. It is not in the firing of neurons or the modeling of language, but in the slow accumulation of meaning across time.

A language model may simulate terror. It may say, “I’m afraid.” But for whom is that fear a turning point? Who remembers the first time they felt it? Who dreads its return? This is the gap no architecture can cross with syntax alone.

A machine without memory — without inscription, without scar, without story — cannot feel. Because feeling, in its human form, is that which echoes.

Thank you for your appreciation of my essay, and congratulations on having the courage to continue from where I left off. I read your essay with pleasure, and I fully agree with Damasio's perspective that there cannot be emotions without an interpretative body-mind loop. Another excellent scientist with a similar perspective is Joseph LeDoux, whose recent books I recommend reading!